Today is Census Day in England and Wales[1]. Happening every ten years, the census provides a snapshot of households across the country to help shape funding decisions and plan for future needs (schools, public services, etc.).

Continue reading Census 2021 and data protection

Tag: Data

Data visualisation – did you see what you think you saw?

There are a lot of people interested in data right now, and there are a lot of visualisations to make that data easier to consume for people who are not data scientists. However, as with any branch of statistics, visualisations can easily mislead. We are programmed to see patterns. If we are presented with a graphic that supports the surrounding text, then we are more likely to believe the argument presented without further research[1]. I wrote about this on the Royal Statistical Society Data Science Section Blog in May, showing how reversing the colours in successive graphics can cause confusion. I’ve seen further examples since, and one caught my eye this month because it was being called out.

Continue reading Data visualisation – did you see what you think you saw?

Remote Data Science – Interview

Last week I was interviewed by Keith Robinson of Ammonite Data on the topic of managing data science teams remotely and all the challenges this brings. The conversation ranged much more widely than that: I looked at the challenges of communication and even the impact that the current extraordinary events will have on models.

I hope you find these enjoyable and helpful.

Data: access and ethics

Last week I attended two events back to back discussing all things data, but from different angles. The first, Open Data, hosted by The Economist, looked at how businesses want to use data and the ethical (legal) means by which they can acquire it. The second, hosted by Ammonite Data, was a round table discussion of practitioners that I chaired, where we mainly focussed on the need for compliance and on balancing the protection of personal data with the access that our companies need in order to do business effectively.

We’re in a world driven by data. If you don’t have data then you can’t compete. While individuals are becoming more protective of their data and understanding its value, businesses increasingly want access to more and more of it – at what point does legitimate interest or consumer need cross the line?

Continue reading Data: access and ethics

A year of Apple Watch addiction and motivation

In early 2017 I got an Apple Watch. I wasn’t fussed about them at the time, as I never normally wear a watch of any sort, but when my husband didn’t want his any more, I thought I’d give it a go. A few months later I was addicted. While I used the word lightly at the time, what really worked for me were the regular achievements and challenges. It was the same thing that got me hooked on World of Warcraft many years ago[1]. I know that when I do something I throw everything at it, but once I can’t complete a challenge I usually drop it. After my initial post about the watch, I found myself in a situation where I couldn’t achieve the challenges. Towards the end of 2017 I had a few too many days in front of the computer with work and just wasn’t active. What I noticed was that as soon as I missed a day of activity, and thus couldn’t get a “perfect month” achievement, I stopped even trying to be active until the start of the next month. If there was no reward, even a completely irrelevant badge in an app, then why try? For most people, myself included, long-term health benefits don’t give the same sense of accomplishment in the short term. So after a particularly gluttonous December 2017 I made myself a promise. Continue reading A year of Apple Watch addiction and motivation

Cambridge Analytica: not AI’s ethics awakening

By now, most people who keep up with the news will have heard of Cambridge Analytica, the whistleblower Christopher Wylie, and the stories surrounding the harvesting of Facebook data and microtargeting, along with accusations of potentially illegal activity. Amid all of this news I’ve also seen articles claiming that this is the “awakening” moment for ethics and morals in AI and data science in general: the point where practitioners realise the impact of their work.

“Now I am become Death, the destroyer of worlds”, Oppenheimer

Continue reading Cambridge Analytica: not AI’s ethics awakening

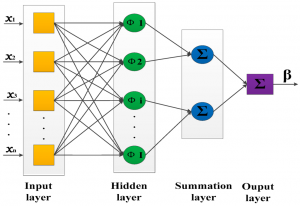

Algorithmic transparency – is it even possible?

The Science and Technology Select Committee here in the UK have launched an inquiry into the use of algorithms in public and business decision making and are asking for written evidence on a number of topics. One of these topics is best practice in algorithmic decision making, and one of the specific points they highlight is whether this can be done in a ‘transparent’ or ‘accountable’ way[1]. If there were such transparency, then the decisions made could be understood and challenged.

It’s an interesting idea. On the surface, it seems reasonable that we should understand the decisions in order to verify and trust the algorithms, but the practicality of this is where the problem lies. Continue reading Algorithmic transparency – is it even possible?